On being Normal

(And still mostly wrong)

This post keeps a promise. I recently enthused about being bored, and was hugely gratified by Mat’s response:

Without doubt the least most boring post ever, so not boring that I laughed out loud in places.

My promise is now to tread on a topic “so mind-numbing that even database designers would prefer to lightly anaesthetise themselves with beer and watch rugby, rather than contemplate the sheer tedium of getting this one thing right.” How brave are you feeling?

But first, a caution

I hope it’s clear in my posts that I’m sequentially building a toolbox for dealing with reality. I’ve embraced being mostly wrong, and then boredom. Here I’ll provide a new tool. It’s a tool for building models of reality—and even computerising them in practice. But we can misapply every shiny new tool in two ways. The first is to misunderstand it; the second is to use it for absolutely everything.

About that second point … there’s almost no danger here. In contrast to the well-known “Brummie screwdriver” (pictured above1 and useful for any task :) the tool you’re about to acquire only seems intuitive, is pretty much universally applicable, and is used infrequently by most people because they just don’t get it! Which brings us to that first point. Understanding.

Everyone wants to be normal, right?

No. I’m not going to talk—for example—about how the United States of America is currently biting off its own limbs in a frenzied attempt to define who and what is ‘normal’ and who should instead be vilified. Nor am I going to preach a sermon on how, if you take a chicken from a flock and put a single red dot on its forehead, the other chickens will mercilessly peck it to death. Those topics are too raw, and too painful, and also too soon. I’m going to set my sights a lot lower, and merely talk about data normalisation. An austere topic. But just you wait!

Good science starts with problems—even database science which, heck, is often not that scientific. Fifty years ago, Ted Codd had a problem. Or rather, the IBM clients he dealt with had a problem. IBM itself was blissfully unaware, and remained so, even when he came up with a solution.

The issue was this: their shit was broken. It being 1970, they may not have phrased it quite like this in polite conversation, but their data was in a mess. Their data were in a mess. Whatever. Sometimes, when they updated a datum, they would find that there were several copies, and they missed one. The same hammer is being sold for two different prices! Now and then, when they inserted something into their data tables, it mysteriously became an orphan, not-joined-up correctly with the things that it should have been joined-up-correctly-to. Where is that new hammer? And on occasion, when they deleted something, a copy mysteriously persisted, a clone who refused to die; or even more mysteriously, hungry orphans appeared, and lay around on the streets of the soon-to-be-ravaged database. The revenge of the deleted hammer category. Things like that. Codd’s solution involves database normalisation.

So what is database normalisation?

Normalisation means re-structuring the relationships between data items to prevent the waste and errors that otherwise arise. It’s fundamentally about finding and using the right rules. Rules for good design. I like to assert that ultimately, all of this is simply about one rule:

Have just one source of truth.

If you have two watches, you never know what the time is. And you have to keep the two watches in synch. All the time. Rather just wear one watch.

When it comes to data, this only seems simple. The ramifications are profound. More rules bubble to the surface. And although pretty much everyone who gets a computer science degree will explore those ramifications in nit-picking detail, and be tested on dependencies and n-tuples and relational models and first-order logic and meta-schemata and anomalies and crows’ feet and Domain-key normal form and Brewer’s theorem and and and … they still won’t quite get it. Why?

I’m now going to make you a really good offer. You see, you don’t actually have to care about the ‘Why?’ If at any point in the following exploration you get bored, you can skip right down to the final section—titled “Normalisation is not about databases”. And you’ll still get the most important point. But me, I like the scenic route. Shall we begin?

Excel—Public Enemy Number One

Normalisation shouldn’t actually be difficult. The theory shouldn’t get in the way, either. But before they started working with database tables, pretty much every programmer picked up a copy of Excel. Or LibreOffice Calc. Or something like that. And they became good at it.

Actually, Excel only came out in 1985,2 but it had its conceptual origins in VisiCalc, which Dan Bricklin thought up in 1978 as a sort of “electronic blackboard” with “electronic chalk”. And there’s your problem. A flat table. A flat-table mind-set.3

The prototype VisiCalc had just 5 columns and 20 rows, but this was expanded to 63 × 254 when it was released as the ‘killer app’ for the Apple II in 1979. It was however soon ousted by Lotus 1-2-3 in 1983. Excel in turn dominated Lotus, and ultimately grew and grew.

With a modern version, it would seem that you can do pretty much anything. You can even relate data to other data in mildly sophisticated ways, for example using pivot tables (invented in 1993). You can link spreadsheets to other spreadsheets. Excel looks like an entire toolbox of infinite promise. There’s a bewildering array of functions. Excel is powerful. Excel can do big things. Wielded properly, Excel can even do decent things fairly well.

The problem arises when you want to do something big and decent and properly joined up. There are still entire countries where senior government officials would be data-crippled if you took away their copy of Excel. And people can die. There’s compelling evidence that part of the national clusterf—k that was the UK’s response to COVID-194 was due to incompetent use of Excel! In fact, Excel is your archetypal Brummie screwdriver. There are other tools, you know.

Ravaged by Loss of Relational Integrity

Let’s say you want to do a big, complex, joined-up thing in Excel (Don’t)! But you do. And you know the tool, right? So you end up building lots of flat spreadsheets (tables) that link to one another. When the number of tabs at the bottom becomes too great, you might even start allocating areas within a single spreadsheet to different tasks. And so on.

And then—despite your best attempts—you find that mysterious anomalies creep in:

=#REF!

You get warned about “circular references”. And so on. This is a microcosm of the problems that Codd’s customers encountered. The problem is that the tool doesn’t really suit the job. But there’s a bigger problem—and this is why people persistently choose the wrong tool. The Brummie screwdriver. Many bad choices stem from a lack of understanding of the right design principles. So let’s look at these. Briefly.

Count to 12, er, 13

Ted Codd was practical. He identified the problem, and proposed a solution. The problem is really “How do we build things so that there is just one source of truth?” The solution is to join things up right—but for this, we need a strategy. We need to make sure our tool fits the job.

So before we look at how to normalise data, let’s take a step back, and work through Codd’s strategic approach. It has 13 lucky rules, typically labelled “Rules 0–12” as that’s how we make computers count, too.5

These are practical rules, but if you’re starting to yawn at this point—remarkably—you can skip them entirely! Yep, you can still use the tool, even if you don’t know how it’s built. But do you trust the hammer maker, or will the head fly off when you wield it?

By the way, I’ve trimmed the explanations of the rules, to keep this short.

Rule 0: The foundation rule: You should not have to cheat. If your “Relational Data Base Management System” (RDBMS) is worth the name, you must be able to do everything below using just this.

Rule 1: The information rule: Everything must be stored right—as single values in rows in tables with columns.

Rule 2: Guaranteed access: Given the table, column and row, you can get the datum you want. Always. (Provided you have access privileges, of course).

Rule 3: Systematic treatment of null values: There is a ‘null’ (And this is an entire post on its own) that allows you to logically and systematically say “something is missing here”.

Rule 4: Dynamic online catalog based on the relational model: The RDBMS describes itself! Better yet, its own structure is built and accessible using the same rules (See Rules 1, 2 and 3).

Rule 5: The comprehensive data sublanguage rule: The RDBMS must have a built-in language that allows you to fully manage everything. An exposition of Rule 0. (This includes defining data, viewing it using ‘views’, manipulating it, checking that things join up right, access constraints, and managing ‘transactions’).

Rule 6: The view updating rule: However you look at a given datum, or data relationships, they must all look the same. Always. In theory and practice. This is actually a biggie!

Rule 7: High-level insert, update, and delete: Not only can you look at a datum: you can also consistently update it, delete it, or insert a new datum. This is the hot core of the RDBM, by the way.

Rule 8: Physical data independence: However you bugger around with the underlying physical storage of the data, the data look and behave the same. A deeper take on Rule 6.

Rule 9: Logical data independence: This is the biggest failing of how RDBMs are put together! What it effectively implies is decoupling between the “functional layer” that’s doing the work for anyone using the data, and the “information layer” that stores the data. Another huge topic on its own!

Rule 10: Integrity independence: You must be able to ensure both data integrity and relational integrity (proper “joined-up-ness”) based on Rule 5—more of this below. Unbreakable, if done right.

Rule 11: Distribution independence: Wherever you look at data, they look the same. An extension, if you wish, of Rules 2, 6, 8 and 9.

Rule 12: The nonsubversion rule: You must not cheat, using some low-level trick to get around the above rules.

(As an aside Greg Kremniz has an insanely good overview of these rules on Quora).

So help me, Codd

I’ve already spoken my one rule—have a single source of truth. If you have a multiplicity of places where you store a datum, then things break. If you skimmed through the above extra rules that ultimately result from this simple idea, then you can see that the implications are vast. And we’ve just begun. We can see how well this plays out by understanding what we’re doing when we’re building a data model.

A data model describes the relationships between things. And here’s an entire paragraph you might wish to skip! Because data scientists want to keep their jobs, and also partly out of convenience, they’ve built up an arcane terminology around this. So a ‘thing’ is referred to as an ‘entity’. When we have a grouping of entities, that’s called a ‘tuple’. Group n things and you have an n-tuple. Something on which other things depend—oops, an entity on which other entities have a dependent relationship—is a ‘key’. A key that uniquely identifies a whole row of data in a table is called a ‘primary key’. A key in a table that refers to the primary key of another table is a ‘foreign key’. And a diagram that describes how components of tables relate to components in other tables is an ‘entity-relationship diagram’.

Using these building blocks, we can build anything. Provided we do it right. And the right way has basically three normalisation rules.6 I’ll keep this simple, by referring to how we actually build tables. Soon we’ll see examples, based on these 3 design rules:

(1) No table has repeating columns.

(2) A column never depends on just part of a primary key.

(3) Every column depends only on the primary key—not on any other column.

We’ve found that to pretty much all intents and purposes, if you structure your data like this you get rid of all the anomalies that plagued Codd’s customers: insertion anomalies, update anomalies, and deletion anomalies.

In fact, if you do this really well—you structure your information layer right—then you will almost never need to change it. In a later post, I’ll explore this practically, and explain why e.g. Medicine is Doomed because We Built the Data Structures Wrong, but let’s simply flesh out (1)—(3) above, now. Together, they embody what we call third normal form.

First normal form

Imagine that you’re building (unwisely, in Excel) a representation of the staff present in an operating theatre. What categories of staff might you need? You’ll likely need anaesthetists, and surgeons, and operating room nurses, and—if we’re talking cardiothoracics—the option of a perfusionist who looks after the cardiopulmonary bypass circuitry, and several other people.

The temptation is to build your Excel table with rows (1 row per operation) and columns (1 column for the perfusionist, 1 column for the anaesthetic tech, 1 column for the primary surgeon, 1 column for the primary anaesthetist) … but then what about extra bodies? How many extra columns do we need for “anaesthetists present”? How many surgeons do we need to cater for?

This sort of ‘design problem’ can naively be sorted out by having lots of redundant columns, perhaps for as many people of that category as we might ever need. Such design is wrong—and not just wrong for the obvious reason that when an anaesthetist encounters an emergency and hits the red button, the total number of anaesthetists who respond will always be one more than the number of columns allocated in your spreadsheet!

The more fundamental problems relate to bad design, and its consequences. Let’s take just one practical example—in asking “Which anaesthetists were present?” you need to know precisely how many ‘anaesthetist’ columns there are. But some sparky adds a new column to cater for that extra +1 anaesthetist—and suddenly Codd’s Rule #9 is broken—all the functional software that interrogates the data needs to be rejigged. Oops!

Second normal form

Now at this point, I could digress for several paragraphs about composite primary keys, choosing among various options for building such keys, ‘natural’ primary keys, and so on. But we can generally finesse things (and make them easier for everyone) by simply building tables that have a single primary key. Then ‘second normal form’ becomes a non-issue. Mostly.

Third normal form

Third normal form, then. “3NF”. This is the biggie. We don’t want a data item within a row to depend on anything other than the primary key (PK) of that row. Consider our Excel “theatre staff” spreadsheet again. Let’s violate 3NF in two ways.

Let’s say we misguidedly decide to ‘conveniently’ store a contact number for each member of the theatre team in a new column in our Excel table. Not only is there massive duplication of data—waste—but it’s clear that the telephone number depends not on the row, but the person. 3NF is violated. And if the person has two telephones, we’re back to something that’s not even first normal form!

As this is Excel, so if you start typing someone’s name, it has a wonderful “auto-complete” function. It’s enormously tempting to insert the actual clinician’s name for each entry. It’s also easy to read. Win-win, right?

Not so fast. People change their names. The text “Artemus Jones” in a box is actually an attribute of the person! If we store the name, and it changes, we have a huge problem. All over, we have copies we need to update. The name here is sorely abused—not only does it depend on something other than the row PK, it is being abused as a proxy for that identifier. Broken!

Without labouring on, the preceding taste of madness should provide at least superficial justification for building things right. Bill Kent gave an even easier way of summarising this:

Under second and third normal forms, a non-key field must provide a fact about the key, the whole key, and nothing but the key [So help me Codd].

Everything joined up right! And that’s what Codd worked out in 1970–1971. It took some time to explain, some time to sink in, and then I believe too many people trained on Excel; and too many people didn’t really get 3NF. So some decided to return to pre-1970. More or less.

But, No, SQL

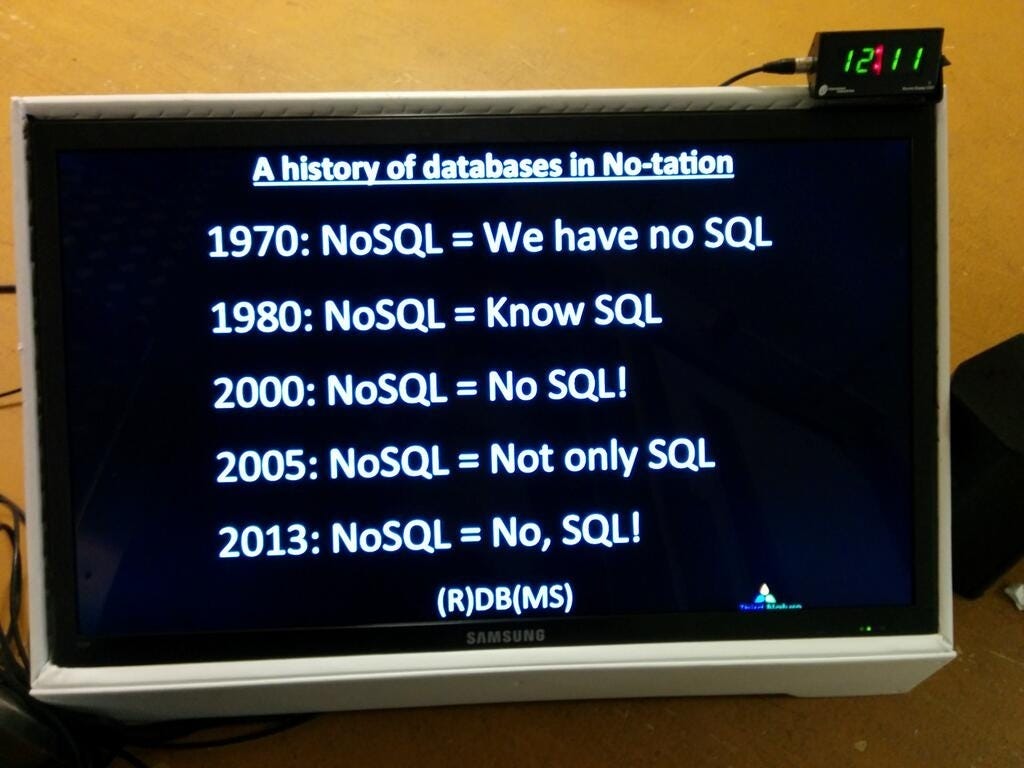

In about 2000, several bright sparkies (who perhaps sat at the back of the class when relational models were being discussed) decided that most of the above is bullsh-t, and when you’re dealing with ‘big data’, you need new, more relaxed rules, especially if you’re going to keep ahead of the opposition and really crunch data fast.

Today, I’m not going to go into the multiplicity of reasons why they are not just mostly wrong (which is OK) but almost categorically insane (which is less OK). The above image7 almost says it all—describing the progression of their philosophy over the years. Perhaps some other time we can explore the madness fully? Suffice it to say, for now, that nobody should even attempt “NoSQL-based design” unless they are fully conversant with 3NF—and have structured their first attempt using solid normalisation principles. So they “No” where they’re going wrong.

Normalisation is not about databases!

Which brings us to our conclusion. You see, I’ve been disingenuous above. I seem to have been speaking about making databases, and I’ve used some rather weak, database-aligned arguments to explain the basics.

But it’s not about SQL, or your RDBMS, or even about tables and columns and rows and data points. The idea behind ‘normalisation’ is about adequately describing reality in a joined up manner—and doing this in a way that allows us to use our model of reality to do stuff. And do stuff well.

We need to eliminate wasteful duplication by building models that contain the ‘right’ relationships.

When I said above that my tool is “used infrequently by most people quite because they just don’t get it”, I wasn’t talking about SQL or indeed RDBMs. They are used everywhere. I was talking about joining up your models of things properly because you understand normalisation. This is not done often or well. Even by database designers (beer and rugby may play a role).

If you’ve read my previous two posts, you may support the idea that although we cannot ever make ‘true’ models of reality, we can make useful models that we can consider provisionally ‘true’. We can also make certain somewhat arbitrary choices of ‘standards’ or ‘reference points’, and build on these, provided we do so wisely and have enough insight to go back repeatedly and check our work.

But we can do more. We can build joined-up models that are useful, and even do this in ways that are immunised against bad design. Sometimes we can reify these models carefully in the form of databases that allow us to store and interrogate data, all without the insertion, update and deletion anomalies that plagued Codd’s early customers.

Normalisation is a way of thinking that has massive practical consequences. That’s the tool.

In my next post, I’ll jump back in time to Cicero, Illinois on 16 May 1924, and then lurch forward to Japan in the 1950s, to see how—once we have the data properly organised—we can interpret them well, especially when those data are changing over time.

My 2c, Dr Jo.

Image purportedly from english.my-definitions.com, but when I clicked on the link to the hammer image in DuckDuckGo, it took me to an arbitrary French site that tried to sell me something quite different. That looks like a nice hickory handle, though.

Ironically, Excel was built by Microsoft for the Mac; the first Windows version only came out two years later, in 1987.

Arguably, for many purposes a tree-structured mind-set is worse. But that too is a topic for another day.

This specific UK instance was about using an outdated version of Excel, and not checking your data, and indeed not amalgamating data correctly—so many failings—but it makes a neat example, and is less arduous than, say, forensic analysis of how every other major institution has doubtless from time-to-time used spectacular incompetence in wielding Excel or indeed, even their RDBMS.

In my understanding, there were initially just 12 Codd rules, which also sounds nice, so when the 13th foundational rule was jacked on 2 years later, it was logically prepended at position zero. They are still “Codd’s 12 rules”, numbering 13.

This section on first, second and third normal form is fundamentally lies-to-children, because the theory is more complex. But it’s good enough to use, which is what counts, for me. It’s, well, mostly wrong, but useful.

The No-SQL image is from a Quora post I wrote 7 years ago, but I’m not sure where I found it then.

I almost didn’t read this loooong article and was simply going to leave a short piece of advice that goes something like this: write shorter.

But I have often read comments by idiots who have clearly not bothered to read the article or watch the video on which they are commenting, and thought “no, idiot, you are going to have to read the article first”.

And then I came across this bit:

“If at any point in the following exploration you get bored, you can skip right down to the final section—titled “Normalisation is not about databases”. And you’ll still get the most important point. But me, I like the scenic route. Shall we begin?”

By then I was hooked so I read the whole damn thing.

Even when I got to this bit: “And here’s an entire paragraph you might wish to skip!”, I skipped on through rather than over.

I read the weekly blogs of another scientist known for his logorrhoea and have often wondered why he does not offer a shorter more condensed version for those who do have the time to be bored. But, of course, we now have LLM that can do this reasonably effectively.

But you do miss the scenery:

“So you think you're a Romeo.

You're playing a part in a picture-show.

Well, take the long way home.

Take the long way home”

My father-in-law was a sort of modern-day polymath and his advice to me that I remember most is “forget speed reading” - and he read seven books at a time! - “take your time; think, absorb, and savour”.

Take the long way home.

And then I read this: “I’ll keep this simple” and “I’ve trimmed the rules to keep this short.” Oh, shit! But then this: “As an aside Greg Kremniz has an insanely good overview of these rules on Quora”. Oh, double shit!

Awww thank you Dr.Jo. How super kind of you to say and how flattering (and honoured I am) to be included “in print” Thoroughly made my day (they don’t let me out much, so…) and very happy to be able to give a little bit back when gaining so much from your writings.

Very much looking forward to your next insightful, intelligent, interesting, inspiring, intrinsically fascinating, funny as fuck and not wrong (despite your title) instalments.

Mat ✌🏻❤️🇬🇧

p.s The longer the better. Hee hee