Bad Players

Journals and sin (Part 5/8)

My medical department is pretty keen on “360 degree” reviews of how each of us is shaping up. These are comprehensive and anonymised. But a bit more than a decade ago I easily worked out which of my colleagues had written one of those reviews. It contained the telltale comment:

Although sometimes patient management is less than entirely adequate, and it is reasonable to comment on this, Dr Jo should be reminded that the term ‘workers’ is not an acceptable descriptive term in such circumstances.

I puzzled over this only momentarily. I knew that an older colleague Dr S sometimes took offence at some of my richer comments, and that the secretaries struggled with his handwriting. The actual word—one that none of the secretaries would imagine him writing—was ‘wankers’.

I felt bad. Dr S is of course right. I cut down on the ripe commentary. In fairness, I likely do mutter similar unflattering comments about myself when I stuff up. As we all do. Often the system sets us up to fail. Sometimes we’re just stupid. We need to recognise this. A favourite phrase of mine is the variously attributed:

Do not impute malice where incompetence will suffice.

The problem is that a small proportion of researchers, scientists and clinicians actually do seem to go far beyond incompetence. This shows up in various ways, but it’s often a combination of deficient insight, self-serving behaviour, lack of compassion, and one more thing ...

Musings on the nature of sin

We’ve just seen⌘ how some wannabe scientists will game the system. What I’m about to discuss goes a lot further. In the past I’ve remarked on the nature of sin⌘—treating people as things.1 I have no difficulty labelling repeated sins as ‘evil’, especially when the perpetrator lacks insight and remorse.

When a scientist is a bad player, bad things may not happen immediately. Sometimes the harm is deferred. But when billions are wasted and patients end up being harmed by omission or commission, I tend to mutter rather a lot.

I’ll defer most of my anger to my next post, when I lay into organised, industrial-strength deceit. Let’s start small. Often we start extremely small, with proteins. I’ve previously described western blots,⌘ more or less in passing. Now is the time to flesh them out.

Bad blots

A western blot is used to identify proteins. This is often at the core of valuable research. Fundamental research in turn may result in billions being spent in the pursuit of cures for important diseases. It would be a pity if all of this expenditure was based on the results of experiments with Photoshop, wouldn’t it?

We’re back with the science integrity sleuths we met recently.⌘ Here’s another wise sleuth: Matthew Schrag, a neuroscientist at Vanderbilt. In August 2021, he was asked to assess published images that were claimed to support a dodgy dementia drug called simufilam.2 This work, however, drew him in an unexpected direction. There were concerns about other Alzheimer’s research.

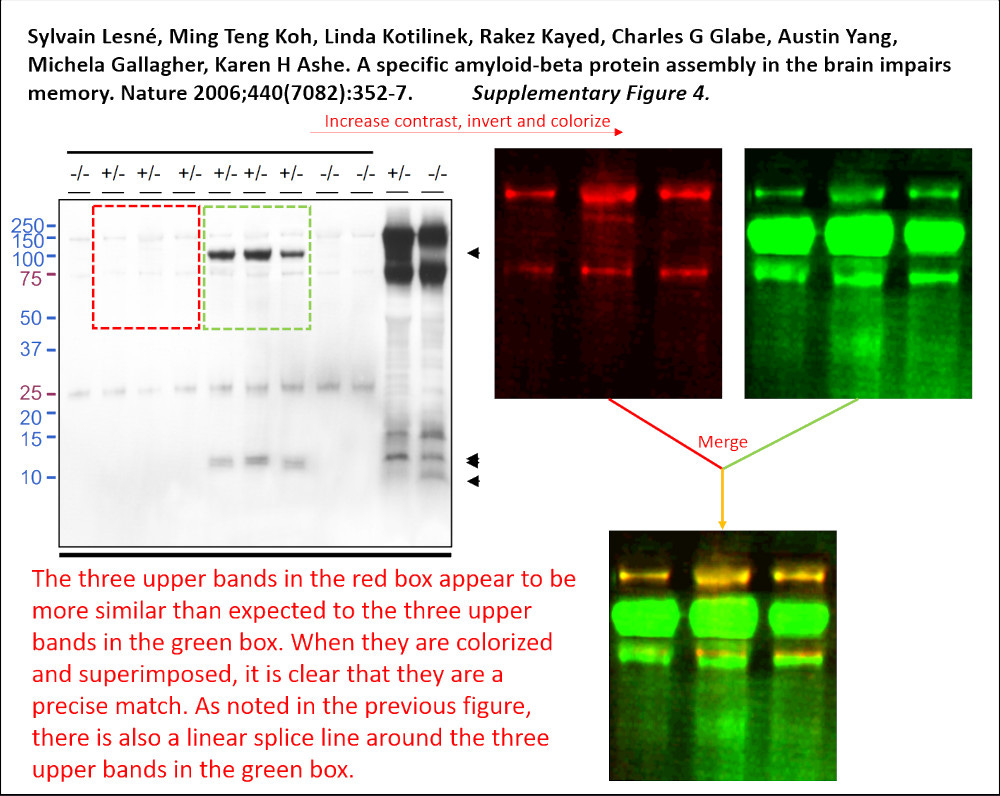

Schrag dug into the work of neuroscientist Sylvain Lesné. This rockstar researcher had published a very influential paper in Nature in 2006. Schrag’s review cast huge doubt on hundreds of images, all previously thought to strongly support the idea that one protein causes Alzheimer’s disease, specifically an assembly of amyloid-β that Lesné called ‘Aβ*56’. The paper, “A specific amyloid-β protein assembly in the brain impairs memory”, has now been retracted, after confirmatory analyses by Elisabeth Bik and Jana Christopher. Lesné resigned from the University of Minnesota on 1 March, 2025.

Astonishingly, Springer still wants to charge you €39.95 to read this crap! Don’t worry, though. Its abundance of defects is on display at PubPeer, including the example at the start of this section. It seems clear that Lesné used Photoshop to manufacture something that wasn’t there—and that this is reflected in much of his work. Work that changed the course of Alzheimer’s research. Dimensions tells us that this article has been cited 2518 times.3 In 2021 alone, the National Institutes of Health forked out over a quarter of a billion US dollars for studies labelled “amyloid, oligomer, Alzheimer’s”.

When the news broke, Alzheimer’s researchers who hitched their wagon to the beta amyloid star were quick to point out that Aβ*56 is merely one (possibly mythical) form of amyloid. But data scientist Chris Said has estimated that if this deceit delayed a successful treatment for Alzheimer’s by just one year, that represents the loss of 36 million quality-adjusted life years, more than the US loss due to World War II. We’ll get back to the ‘amyloid hypothesis’ in my next post. This is just one egregious example of apparent medical evil. If you look, they are legion.

The stem of evil

The Lesné fiasco is not isolated. Leonid Schneider describes a web of related research from a variety of suspect scientists. There are unexpected tie-ins to anti-ageing researchers we’ve previously encountered, notably Tony Wyss-Coray!⌘ As I’ve said before, they all know one another.⌘

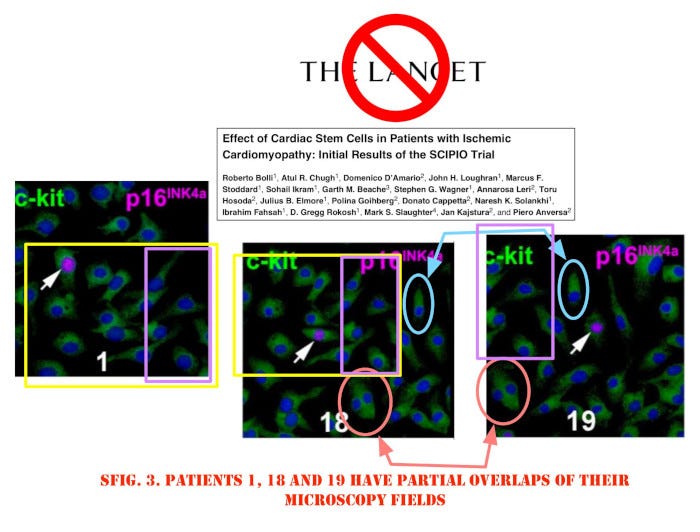

Another rich source of fraudulent research is stem cells. We’ve recently encountered the apparent infiltration of the British Medical Journal by papermillers seemingly hell-bent on selling stem cells for heart muscle ‘rescue’ after heart attacks, but this is just the end of a long and sordid adventure. In the early 2000s, Piero Anversa garnered millions of dollars in funding following his claims that adult stem cells could fix damaged hearts. Semantic Scholar still gives him an h-index of 119, with over 60,000 citations.

A six-year investigation that started in 2011 found that Anversa’s data were faked. Harvard asked for retraction of 31 papers, some in top journals like Lancet and New England Journal of Medicine. The cut-and-pasted images shown above are from the Lancet article. Partners Healthcare and Brigham & Women’s Hospital paid out $10 million, but this is a drop in the bucket. The NIH has spent over half a billion on such research since 2001.4

No discussion of ‘stem cell evil’ would be complete without mentioning trachea transplants. But the story and conviction of rogue surgeon Paolo Macchiarini, together with the deaths of seven of his eight trachea transplant patients, is now so well known that I’m not going to repeat it here. Instead, read an excerpt from Carl Elliott’s “The Occasional Human Sacrifice: Medical Experimentation and the Price of Saying No” at Retraction Watch. This emphasises how whistleblowers were persecuted by the top brass at the Karolinska Institute. What may be less well known is how much of Macchiarini’s research work was dodgy. This example from PubPeer is typical, showing all sorts of image manipulation. It seems he effortlessly translated his sins from bench to bedside.

“No patients were harmed”

One of my specific interests is pain management. Cutting into people with sharp knives often causes a lot of pain (D’Oh!) so I’m particularly keen to address this. I devote a week every month to the management of perioperative pain, and have done so for decades.

For years, I wondered about the facility with which Scott S Reuben produced glib, positive papers on ‘multimodal’ perioperative pain relief. He practically invented ‘multimodal analgesia’. Pharmaceutical companies loved him, because he seemed to rapidly and effortlessly produce a new study in support of a particular product.

I no longer wonder. I know. Reuben was Professor of Anesthesiology and Pain Medicine at Tufts. In 2008, a fortunate audit revealed that he’d not been given approval for two studies he wanted to present. Further enquiry was a bit embarrassing for Baystate Medical Center in Springfield, Massachusetts, as he admitted that none of the multitude of studies he’d published actually involved treating patients’ pain. They were all made up.

You might briefly argue that, well, “No patients were then harmed”, only to realise that people used his studies as evidence to support giving harmful drugs to millions of patients. For example, rofecoxib (Vioxx, Merck) likely killed several tens of thousands of people before it was withdrawn, and Scott inventively contributed to the supportive literature.

In fact, the discipline of anaesthesia seems to be particularly rich in frauds. Some of the most spectacular individual transgressions live here. We have Joachim Boldt, who pretty much wrote the literature on volume resuscitation using hydroxyethyl starch. All made up. Over 200 retracted papers.5 There’s even an ignominious leaderboard at Retraction Watch, with Boldt at the top, and another anaesthetist, Yoshitaka Fujii, yapping at his heels.

The life of Brian

Just to be clear, right from the start. This section has nothing to do with that superb movie Life of Brian. Any resemblances are coincidental.

Brian Wansink preached about food. Born into a humble, blue-collar family,6 he gained a BS in business administration from Wayne State College, followed by a PhD in marketing. The latter helped a lot as he worked his way up to Cornell, where he focused on ‘food choices’. In 2007, he was even appointed executive director of the Center for Nutrition Policy and Promotion at the US Department of Agriculture.

We’ve previously discovered⌘ that most non-communicable diseases like type 2 diabetes follow the flow of commodities into communities, and that individual humans have little agency when it comes to fixing this. The Gospel according to Brian, however, seems to be that people can make a difference simply by changing their behaviour.

This revelation was followed by a flood of corrections and retractions. His theology of food was based on bad numbers. Brian seemed extraordinarily awful at counting loaves and fishes. He would take a negative study and perform miracles, resurrecting it in the form of five papers. For Brian, the tragedy was that he betrayed himself. In his now-infamous Judas post, he revealed his personal pathway to salvation:

When she arrived, I gave her a data set of a self-funded, failed study which had null results (it was a one month study in an all-you-can-eat Italian restaurant buffet where we had charged some people ½ as much as others). I said, “This cost us a lot of time and our own money to collect. There’s got to be something here we can salvage because it’s a cool (rich & unique) data set.” I had three ideas for potential Plan B, C, & D directions (since Plan A had failed). I told her what the analyses should be and what the tables should look like.

Wansink would get his students to troll through the data until they found ‘something of significance.’ He was p-hacking!⌘ All the time. It seems Brian wasn’t all that bright. Another way to read this is that he’d never been shown how to do research properly, and had simply drifted upwards, like a party balloon filled with gas. Until he exploded.
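To put a rough number on why trawling eventually finds ‘something of significance’, here is a minimal sketch. It assumes every extra analysis is an independent test of a true null result at the usual 0.05 threshold. Real re-analyses of one data set are correlated, so treat this as illustrative only; the function name is made up for this example.

```python
def chance_of_false_positive(n_tests: int, alpha: float = 0.05) -> float:
    """Probability that at least one of n_tests independent tests of a
    true null hypothesis comes out 'significant' at threshold alpha."""
    return 1.0 - (1.0 - alpha) ** n_tests


# How quickly a 'finding' becomes likely as the number of analyses grows
for n in (1, 5, 20, 60):
    print(f"{n:>3} analyses: {chance_of_false_positive(n):.0%} chance of a spurious 'finding'")
```

Under these assumptions, twenty looks at a null data set give roughly a 64% chance of at least one ‘significant’ result that isn’t real.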

Fail

And this is where my criticism falters. Above, we’ve seen some pretty bad stuff. It’s good that the flawed science was found out. The intrepid sleuths who hunted down the fraudsters deserve praise. But there’s something missing.

Especially when we look at an untethered balloon like Brian. As I see it, he’s somewhat pathetic. ‘Bad players’ can be evil, and they can simply be bad at their job. If I were to use my previous, unacceptable metaphor from above, I might classify him as a bit of a wanker. But this rather misses the point. Surely, individuals need to take responsibility for their evil actions. Surely, we need to be vigilant for those same transgressions. But if we are to fix things in the long term, then with equal certainty we need to step back and ask “Why?”

As I said right at the start, often the system sets us up to fail. This runs deep. Even the worst of the above researchers might have had a different outcome, had they been better taught. Or better caught. At the very least, might the damage have been limited and the next dodgy practitioner forestalled?

What happens instead? Retractions are tardy. The more prestigious the institution, the more they have to lose, and the more they may paper over the problem. Even if this allows harm to continue. Even if this means completely failing to fix the underlying processes that facilitated evil.

And when we get to drug companies—my next exploration—this problem is amplified.

My 2c, Dr Jo.

⌘ This ‘of interest’ symbol flags links where I discuss the topic further in one of my Substack posts.

The previous post in this series was Hirsch hacking.⌘

1. The ‘Weatherwax’ definition of sin.

2. From the suspect pharma firm, Cassava Sciences. Simufilam was discontinued in November 2024. It’s rubbish.

3. Google Scholar says ‘3608’ and gives him an h-index of 38.

4. There was also the dodgy heart stem cell therapy punted by Bodo-Eckehard Strauer.

5. Right from the start, many of us wondered whether grinding up corn and potatoes and infusing the ultimate products was a great idea.

6. Not, as far as we know, in a manger.

Evil is magnified by citation.

Certainly, a less difficult method of vetting a publication that you wish to cite is by noting the number of citations it already has in Web of Science. Surely, someone else has already done the difficult work of vetting the publication, especially if it comes from a prestigious institution and a publisher with a good reputation.

And papers have their own bibliographies, with their own citations, which calls to mind the fleas that have their own fleas to bite 'em.

I personally like papers that, at least, have their own datasets. Then I can see whether the same statistical analysis on the same data gives me the same statistical results.

Another thing I can do with actual datasets is run simple queries that reveal 'artifacts' (unlikely values), such as exactly identical values, or other things that are simply too neat.
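A minimal sketch of the kind of query described above, using only the Python standard library. The function names, the threshold and the measurements are all made up for illustration; a repeated value is a prompt for a closer look, not proof of anything.

```python
from collections import Counter


def repeated_values(values, min_count=3):
    """Return {value: count} for values occurring at least min_count times."""
    counts = Counter(values)
    return {v: c for v, c in counts.items() if c >= min_count}


def duplicated_rows(rows):
    """Return {row: count} for whole rows that appear more than once."""
    counts = Counter(tuple(r) for r in rows)
    return {row: c for row, c in counts.items() if c > 1}


# Made-up measurements, purely for illustration
weights_kg = [71.3, 68.9, 71.3, 80.2, 71.3, 65.0]
patients = [
    (54, 71.3, 128),
    (61, 68.9, 135),
    (54, 71.3, 128),  # identical to the first "patient"
]

print(repeated_values(weights_kg))   # values that recur suspiciously often
print(duplicated_rows(patients))     # records that are exact copies
```

Exact repeats in continuous measurements are not impossible, but several of them in a small sample are the sort of 'too neat' pattern that justifies asking for the raw records.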

Often, we don't start debunking unless our tingly spider sense (or just sense of smell) tells us that something doesn't add up, or even worse, that something adds up too neatly (the sewage has perfume to disguise the odor.)

What worries me more about faking by Photoshop is that, yes, it is detectable by pixel peeping, but it would have been easy to fake the data in a way that isn't.

For example, spike a western blot so it shows a real extra line in about the right place (or maybe even cut it from a different image not shown), present two different microscopy images from the same sample pretending to be different patients... A bit harder, but still much easier than actually doing the work.

The ones that get caught aren't just evil, they are also lazy and stupid. And I wonder which ones manage to pass their cheating under the radar.