See that dog run

This is not a post about greyhound racing

NB. Don’t forget to vote at the end!

In my country—New Zealand—we’re about to ban greyhound racing. Too many dogs have died, and perhaps forty times more get injured. This reflects a worldwide trend.

Obviously the central driver for greyhound racing has been financial: in the hope of rewards,1 people bet substantial amounts of money on the ‘sport’, as with horse-racing. The greyhound racing industry that has built up around this practice (Greyhound Racing New Zealand, GRNZ) naturally claims that it ‘cares’, and has enthusiastically sung the Wotabout Song: that other people also harm animals, so there. But we’ve had enough, and we’re winding it down. Too much badness.

Another way of looking at the whole thing is simply to watch a greyhound running. Isn’t it a wonderful picture? Graceful. Elegant. Sufficient justification for the existence of the breed, perhaps, if not for the existence of the ‘sport.’

They are beautiful animals.

Origins

Introduced in 1851, the European hare spread inexorably throughout New Zealand. Greyhounds were brought in to control the hares; the first greyhound racing club was formed in Southland in 1876. Racing took on a life of its own, and in 1948 a ‘tin hare’ was introduced in Christchurch, with tote betting starting in 1978. Before the tin hare, a live hare was used, with points awarded for the chase and the kill.2

It’s interesting to contrast what GRNZ says with independent reports like the Hansen Report and the Robertson Review. The latter notes:

Those tasked with monitoring the industry, such as members of the GRNZ Health and Welfare Committee, or NAWAC, have advised it is incredibly difficult to obtain even the simplest information …

I have been advised by MPI officials that veterinary approval does not ensure euthanasia is performed for good reason, and a veterinarian has no power to prevent an owner/trainer from requesting euthanasia for ‘unreasonable’ circumstances (e.g. barking or other inconvenient behaviours, not fast enough, excess to requirements, minor injury) …

Tracking of dogs post-racing career is limited, and there is little available information regarding dogs that never make it to the track …

GRNZ has made its job harder by unnecessarily obfuscating information and pushing back against those with an interest.

Beautiful dogs

The suffering of greyhounds is very real. The hares don’t have fun either. But heck, they look beautiful while running, don’t they? In watching the dogs running, we can almost put aside the injuries and deaths; the vision of a terrified hare being crunched. Almost. Until we come to our senses.

Forgive me if I take this as a metaphor, and run with it. Last week, two smart people enthused to me about two deployments of artificial intelligence (AI). Specifically about agentic AI. Beautiful things that ‘just run’. Perhaps we need some more detail, though?

“They built what I wanted and it works”

The first is a correspondent on Substack. In response to a recent post⌘ of mine, he described how he’s got agentic AI to just run and do remarkable things. Here’s a quote:

The tricky part is making it possible for the LLM [large language model] to recognize that it has failed. That is definitely an art! The trick is to define the requirements very carefully, almost like a legal contract.3

I also do what I call “overtesting” where I will require the AI to test its own work in a way that is ludicrous overkill compared to what I would ask of a human developer. For example for a UI task, I may demand whole libraries of screenshots, verified correct via command-line OCR tools and image comparison tools, Playwright-based fuzzing, video captures and video review sub-agents...

But then I come back and 4x agents have been running overnight and they built what I wanted and it works. They went in the wrong direction several times and my /tmp is a pile of trash :) But the final version is correct …

… I think it again comes down to carefully articulated requirements and the willingness to blow compute on author/critic patterns, as well as simulate-before-execution approaches.

Impressive.

“She taps approve. One tap”

The second is a very smart medical colleague who is very much taken by AI in Medicine. He showed me this dog, running …

Nope. You don’t need to visit the link, if you are allergic to X. The author sells the dream. A primary care provider sleeps while her ‘agentic EHR’ runs all night. All she need do in the morning is check that the headlines make sense, and approve. He gives one example.

It just runs, right?

Not so fast. First, let’s Science it!⌘ After all, Science is what we have. We ask:

What are the actual problems?

What is our explanatory model? (We refine/reject/refashion our models until they work and meet our explanatory needs)

What does this predict, and how can we change things? Does agentic AI ‘fit’?

Let’s start with the software. It just runs, right? But what are the real problems with software? Here’s my list. In order.

Security

Suitability & Safety

Reliability

Maintainability

Efficiency

We can then ask questions like “Can AI help?” We can also ask “What are the catches?” and “Will any dogs be harmed?”

The appearance of adequacy

For me, the obvious problem with the first agentic example is belief in the appearance of adequacy. Especially with LLMs, we cannot trust appearances. They cannot even be relied upon to follow the rules of chess!⌘ Our agentic ‘coder’ has no meta-cognition, so it can’t be trusted to ‘get’ the purpose and context of the task. One of the main things agents have added is an extra layer of obfuscation, making it more difficult to evaluate them. Inverse scaling—where more is worse—can occur with agentic AI too.4

We know that in Science there is no proof: we rely on continual re-examination of models of reality. In contrast an LLM is a crystallised model of language.5 Iteratively applying the LLM is different from refining a scientific model.

If you’re not a programmer, you may wish to skip the next two paragraphs.

There is a counter-argument that runs like this: “But he is getting the LLM to run regression tests, and these will show proof of adequacy”. In response, I’d observe that for a non-trivial project, there’s an almost infinite number of regression tests that we can design. Being able to build the right ones depends on a deep understanding of the potential problems, key edge cases and context.6

Still not convinced? Why not try a very simple task: write code in the language of your choice (e.g. Python, Java, C++) that compares two floating point numbers and returns True or False depending on whether they’re close enough or not, for a supplied small value (epsilon). Once you’ve done this to your satisfaction, ask an LLM to do the same. (It may well do far better than you). Then ask it for a suitable set of regression tests. Now read this article and re-think those tests. Finally, ask yourself whether your routine is appropriate for checking the results you obtain in testing a set of linear algebra functions. What problems might still arise?
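For readers who want a starting point, here is a minimal Python sketch of the exercise. It is only an illustration, not necessarily what the linked article recommends: the function names `close_abs` and `close_rel` and the parameter `abs_eps` are hypothetical. It contrasts the naive "absolute epsilon" comparison with one common alternative, a tolerance scaled by the magnitude of the inputs plus an optional absolute floor for values near zero. Python's standard library offers `math.isclose` in a similar spirit.

```python
import math


def close_abs(a: float, b: float, eps: float) -> bool:
    """Naive version: compare the absolute difference against eps."""
    return abs(a - b) <= eps


def close_rel(a: float, b: float, rel_eps: float, abs_eps: float = 0.0) -> bool:
    """Relative comparison, scaled by the larger magnitude.

    abs_eps is an optional absolute floor (a hypothetical parameter name),
    needed because a purely relative test can never match against zero.
    """
    if math.isnan(a) or math.isnan(b):
        return False          # NaN is never close to anything
    if a == b:
        return True           # exact matches, including equal infinities
    if math.isinf(a) or math.isinf(b):
        return False          # an infinity is not close to anything else
    tolerance = max(rel_eps * max(abs(a), abs(b)), abs_eps)
    return abs(a - b) <= tolerance


if __name__ == "__main__":
    # Tiny values: the absolute test calls these "close" although one is double the other.
    print(close_abs(1e-12, 2e-12, 1e-9), close_rel(1e-12, 2e-12, 1e-9))
    # Huge values: the absolute test rejects a difference that is tiny in relative terms.
    print(close_abs(1e20, 1e20 + 1e5, 1e-9), close_rel(1e20, 1e20 + 1e5, 1e-9))
```

Even this small sketch leaves open the questions raised above: which tolerance suits results from linear algebra routines, where errors accumulate with problem size and conditioning, is a judgement that no single epsilon settles.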

The Agentic EHR

The ‘agentic EHR’ has similar problems. Even worse. That post on X is an advertisement. It’s not backed up by prospective research, for example a randomised trial or a stepped-wedge trial. The doctor who taps ‘Approve’ has every reason to believe that the agentic LLM fumbled some decisions—it’s a model of the language of Medicine, not a model of reality. So far, AI has tended to muck up complex medical problems, so we need solid evidence that ‘improvements’ won’t do this too.

But we don’t need to re-litigate this. I’ve just written a ten-part series of posts on Substack⌘ that explores the fixes Medicine needs, and shows why AI is not the answer.⌘ Especially LLMs, which in addition to not addressing the actual problems with Medicine and its computerisation, add the liabilities of ‘hallucination’, sycophancy, naive acceptance of what they ingest, and that complete lack of an internal model of the real world.

But wait, there’s more …

Dead dogs

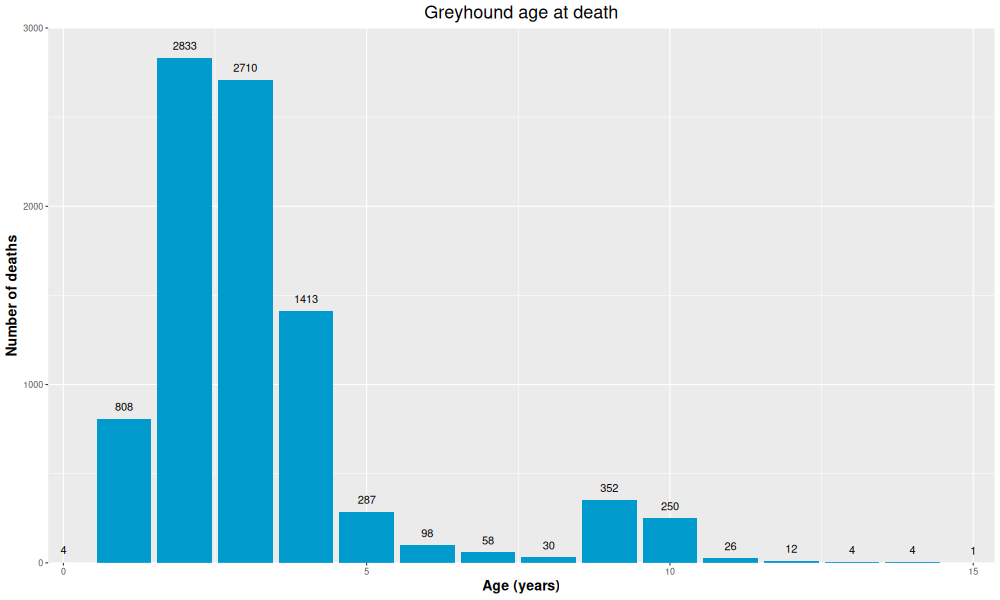

Look at the above graph. What can you deduce?

In these NZ data, the average greyhound lives about 3 years, and almost all are dead by 5. Then there’s that strange secondary peak at 9 years. If you look into the survival of all pet dogs, graphs normally start dipping at 10 years and most are dead by 15.7 Larger dogs tend to have somewhat shorter lifespans, but the above graph is shockingly curtailed.8 I’d suggest that the secondary peak on the right is rescued dogs, treated right.

I’m now going to be provocative. I’m going to look at a few dead AI dogs. We need to complete our metaphor.

First, financial costs. AI isn’t necessarily cheap. One Figma AI user managed to rack up a spend of $70,000 in Claude tokens on a $20 per month contract. Liu Xiaopai regularly managed to burn through $50K on a $200/month account.9 You might say “Good for them!” but you also need to ask “Who paid?”

And then we have deliberate ‘tokenmaxxing’: employees trying to show off how busy they are by maxing out their AI spend!

Let’s spell it out. In our first example, our senior dev set his agents to work, and 4x agents ran overnight, working on “whole libraries of screenshots, verified correct via command-line OCR tools and image comparison tools, Playwright-based fuzzing, video captures and video review sub-agents.” How much did this cost?

Let’s repeat our second scenario over every patient record in the US. Every night, the AI agent explores the active records, extensively cross-correlating. What will this cost, and who will pay just the energy bill?10

Two trillion dollars is being invested in AI this year. The billionaires driving this will want huge profits. Who will pay? I’d suggest that the answer is “You will”.

… and suffering

But financial costs are the lesser part of the problem! Agents won’t be infallible. There’s the matter of finding and fixing the inevitable, residual bugs when they surface. To the best of my knowledge, nobody so far has shown that AI-generated code is more reliable overall; rather, the converse has been shown.

Indirect costs are far worse. As I write, young, smart people are struggling to find work, because mega-corporations are all caught up in AI, seeing the opportunity to “cut costs” everywhere else. While their remaining employees tokenmaxx.

We are also failing to train the software developers we will need, even if it’s only to become senior devs who can guide AI agents wisely and fix their worst excesses. The speculation that they too will be replaced by AI doing proper metacognition is just that: speculation. There is at present nothing of substance to endorse the widespread current belief that this will happen in the next decade or so. And if it does, the societal cost will likely be immense.⌘

Worse still, we are empowering autocratic billionaires, and weakening pretty much everyone else.

But heck, See that dog run!

Where would you like to run?

Now that I’ve completed my series on the EHR,⌘ I’m unsure what to cover next. Perhaps you can help me. Something I’ve been meaning to dig into is evolution, natural selection and the origins of life, especially recent advances in the field. But I’m torn. I’d also like to do a series on fixing those five chronic software problems I listed above. That’s quite a contrast. So I have a little survey for you …

Thanks for voting. The overwhelming choice is ‘Natural Selection’, so that’s where I’ve gone ⇶

My 2c, Dr Jo.

⌘ This symbol is used to indicate posts where I’ve discussed the flagged topic in more detail.

1. Or because they’re addicted to gambling.

2. Using live hares was banned in New Zealand in 1954.

3. A slightly cynical footnote: I call this ‘programming’ :)

4. Looking at this in more detail, I was surprised to find that there’s already lots of evidence that agentic reasoning is not all it’s cracked up to be. Using General AgentBench, Li et al. recently found that transitioning to general-purpose agents resulted in substantial performance degradation; there are also large concerns about reward tampering and similar behaviours. Agents can become misaligned. Then there’s security.

5. ‘Crystallised’ in the sense that the actual model is committed. It effectively cannot be refined or updated. At least, not without enormous expense.⌘ So instead, the shroud of code around it is tweaked, as an inadequate compensatory mechanism.

6. As I see it, the regression tests should emerge as you code, guided by the insights, testing and failures you encounter while coding.

7. Working dogs tend to retire at about 10 years of age.

8. Either because most dogs died young, or because (in some ways worse) their data are unreliable, suggesting lack of concern as to whether older dogs are even alive.

9. Until throttling was put in place. Conversely, to save tokens, some users are interrogating LLMs in languages other than English.

10. Added after publication: Ed Zitron has a scathing post on the cost of AI: The Subprime AI Crisis is Here. It’s a fine (and extremely long) rant.

1. Anyone who hasn't realised by now that LLMs are impostor pilots in the cockpit, throwing all the jargon around with no understanding, with a mixture of lucky guesses and sheer buffoonery, hasn't used them for anything but trivial informative prompts. The risks are huge everywhere. There are operational risks, distraction risks, focus risks that need to be addressed. I believe that no FAA- or FDA-style airworthiness certificate would ever be issued if there were an FAIA-like equivalent. Imagine an autopilot replaced by an LLM-style AI. How many crashes would happen? And how would pilots spend their time trying to control the autopilot? At the very least, I think all LLM or agentic software should carry a health/risk warning.

2. That EHR article on X is unreadable because of the use of unexplained acronyms. If I said SLV to a non derivatives quant in finance, or SU(3)xSU(2)xU(1) to a non quantum field theorist, without explanation I'm either guilty of carelessness or, worse, attempts to bamboozle. Never use acronyms unless confident the audience understands them. I know it's SNAFU but the feeling of BOHICA is unbearable.

3. I'd be interested in your take on two different topics. I will mention one. That's Denis Noble & Michael Kevin on one side vs Dawkins et al. on the other, regarding not just evolution but the roles of genes. I must own up and state I find Dawkins incapable of abstract thought, so it might be useful to have a perspective from someone with an appreciation of more abstract thinking.

As near as I can tell, LLMs have long-term financial problems. Current pricing is well below what is needed to pay for operating costs: the energy needed to fully process available external data regularly to remain up to date; the energy needed to reprocess the processed data in response to inquiries; the cost of interest on the loans needed to acquire the necessary facilities (the computational equipment, the buildings to house it, and office space); and costs for support personnel. At some point it will be necessary to increase pricing for use of these models, and that will affect the cost/benefit ratio of replacing personnel costs with the use of those models. That should decrease the use of the models. I have no obvious means of estimating the price-demand curve. Maybe an LLM could address that question?