Deus ex Machina

Will AI Save Medicine? (Part 9 of ‘Fixing the EHR’)

A great big thank you to everyone who voted in my last post.⌘ I was impressed on two counts. First, pretty much everyone preferred Picasso’s scribble over the machine drawing.1 Second, there was a strong sentiment that modern ‘AI’ won’t contribute much to fixing Medicine.

In the past few posts, we’ve seen what Medicine needs to fix it: joined-up thinking, and processes that not only work better, but that can be continually improved.2 In fact, most of this comes down to the very boring basics: listening to people,⌘ understanding Deming,⌘ building the right information structures,⌘ and understanding how Good Medicine depends on causal models⌘ that are continually improved—good medical science. That and spending money on Medicine rather than on War.3 Can ‘AI’ help?

AI medical scribes

Let’s start on a positive note. LLMs are showing some promise in medical transcription. Large studies are starting to emerge. In a preprint on medRxiv, Enni Sanmark and colleagues from Finland evaluated the outcomes of 375,000 AI-generated notes. They found the time spent decreased from six and a third minutes to four and three-quarter minutes per note, and subjectively, clinicians reported improvements. Paul Lukac et al. from UCLA did a randomised trial with 3 parallel groups, involving 238 outpatient physicians and 23,653 visits. Interactions consisted of ‘usual care’, Microsoft DAX Copilot or Nabla, and similar to the Finns, they looked at physicians’ self-assessment. The ‘time in note’ measurements of a bit over 4 minutes decreased respectively by 18 s, 23 s and 41 s, and the physicians felt happier. In Madison, Wisconsin, Majid Afshar and others framed their study somewhat differently as “A Pragmatic Randomized Controlled Trial of Ambient Artificial Intelligence to Improve Health Practitioner Well-Being”. They did a 24-week, stepped-wedge roll-out of ambient AI, looking at professional fulfilment and work exhaustion, as well as ‘interpersonal disengagement’ across 71,487 notes. According to them, exhaustion decreased by nearly half a point on a five-point scale.

There are some study limitations. In the Finnish study, there was no assessment of note quality. The similar UCLA study, in which 91/331 providers were simply excluded, did report ‘occasional’ inaccuracies and biases.4 The Madison study measured quality in terms of ‘diagnostic billing codes’, which improved, and documentation quality, which was unchanged.

The thing that struck me, though, is that in most studies the motivation for spending money on these tools is “saving time”, which is then used to justify the expense, with an occasional nod to physician burn-out.

There are other issues. In a small, recent US study, reviewers preferred AI-generated to physician-authored notes despite similar quality. (Why should this be?) Notably, they report ‘hallucinations’ (confabulation⌘) in 31% of AI notes, but also in 20% of clinician notes! Confabulation has been encountered commonly in other studies. There are bigger problems though …

AI won’t do the job

I see a little silhouetto of a man

Scaramouche, Scaramouche, will you do the Fandango?

Thunderbolt and lightning very very frightening me (Queen)

Gartner puts the 2026 AI spend at over US$2 trillion, mostly pursuing ‘artificial general intelligence’ using Large Language Models (LLMs). I’ve previously pointed out that this is a ginormous bubble,⌘ but many experts will still disagree. People are flinging money where their mouth is, too.5

Trouble is, it’s not just that this investment won’t succeed. It can’t succeed. Let’s briefly review why.

The fundamental ‘success’ of LLMs is based on illusion. It’s that simple. Smart people have confused the appearance of thinking with actual thinking.6 Proper, joined-up thinking has several important characteristics: we create an internal model of the world, with internal ‘arrows’ that represent causal effects. This model matches reality in some way: if there are looming, dark clouds and there’s rain, we think that the clouds caused the rain, and not that the rain caused the clouds. If there’s thunder and lightning, we work out that the lightning causes the thunder, and not the other way around.

Our models and our thinking can become even more abstract and useful. We can measure and model what’s happening in the atmosphere, and predict the likelihood of rain. We can even think in terms of counterfactuals: “What would happen if that low deepened?”
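For the programmers in the audience, here is a minimal sketch in Python of what those internal ‘arrows’ buy us. It assumes a made-up world with a single arrow, clouds → rain, and probabilities I have simply invented; `simulate`, `frac` and the `P_*` constants are hypothetical names, not anything from a real weather model. The point is only the difference between seeing rain and making rain.

```python
import random

# Invented numbers, for illustration only.
P_CLOUDS = 0.3             # chance of dark clouds on a given day
P_RAIN_GIVEN_CLOUDS = 0.8  # the arrow: clouds -> rain
P_RAIN_NO_CLOUDS = 0.05    # rain without dark clouds is rare

def simulate(n, rng, force_rain=None, force_clouds=None):
    """Draw n days from the toy world.

    A force_* argument is an intervention: it overrides that variable
    and so cuts any arrow pointing into it.
    """
    days = []
    for _ in range(n):
        clouds = rng.random() < P_CLOUDS if force_clouds is None else force_clouds
        if force_rain is None:
            p_rain = P_RAIN_GIVEN_CLOUDS if clouds else P_RAIN_NO_CLOUDS
            rain = rng.random() < p_rain
        else:
            rain = force_rain
        days.append((clouds, rain))
    return days

def frac(days, index):
    """Fraction of days on which variable 0 (clouds) or 1 (rain) is true."""
    return sum(day[index] for day in days) / len(days)

rng = random.Random(0)
observed = simulate(100_000, rng)
rainy_days = [day for day in observed if day[1]]

# Seeing rain: how often were there clouds on the days it rained?
p_clouds_seeing_rain = frac(rainy_days, 0)
# Making rain (say, with a giant sprinkler): how often are there clouds?
p_clouds_making_rain = frac(simulate(100_000, rng, force_rain=True), 0)
# Making clouds: how often does it then rain?
p_rain_making_clouds = frac(simulate(100_000, rng, force_clouds=True), 1)

print(p_clouds_seeing_rain, p_clouds_making_rain, p_rain_making_clouds)
```

Under these invented numbers the first figure comes out near 0.87, the second near 0.3 and the third near 0.8. Seeing rain tells you about clouds, but making rain leaves the clouds untouched, whereas making clouds brings rain. A model with the arrow pointing the right way can answer all three questions; a table of co-occurrences can only answer the first.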

An important aspect—the important aspect—of our models is that they are adaptable. This is important because all models are wrong (but some are useful).⌘ Models that work don’t just reflect reality, they predict reality better than chance. Not perfectly, but usefully. And as we improve, so we update or even replace our models.

But can’t LLMs do this?

No. Not even close. The first thing that most smart people don’t get about LLMs is that they are frozen in time.⌘ Millions or even billions of dollars are put into crystallising large amounts of distilled language into the giant, weighted matrix that defines an LLM. It is then frozen.

All subsequent refinements are made in the shell of code that surrounds the model, not to the model itself.7 This is because the internal organisation of the model is inscrutable, so changing even a single fact runs the risk of unpredictable side effects—catastrophic forgetting. Therefore, over time, the shroud of code will become more and more dissociated from the inner model.8

“Gotcha! You said ‘inner model’!”

As I’ve already said, the LLM has an inscrutable inner model. This is however not a model of reality. It is a model of language. That’s why LLMs are so good at translation, and so good at giving the semblance of coherent thought. They are not trained on reality, but on what people have written about reality. They predict not how ‘reality will behave’ but the next word in the sentence. Clinicians are taken in, like everyone else. The generated text is so convincing.
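A deliberately crude sketch in Python may help here. It is not how a real LLM is built (those are vastly larger neural networks that look at far more context); it is a toy that counts which word follows which, and `train_bigrams`, `predict_next` and `generate` are hypothetical names of my own. The tiny corpus is invented too, and it contains a falsehood written down twice.

```python
from collections import Counter, defaultdict

# A tiny invented corpus. The claim in the last two sentences is false,
# but it is written down more often than the true one.
corpus = (
    "lightning causes thunder . "
    "clouds cause rain . "
    "rain causes clouds . "
    "rain causes clouds ."
)

def train_bigrams(text):
    """Count which word follows which. These counts are the whole 'model'."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequently seen next word, or None if the word is unseen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]

def generate(follows, start, max_words=5):
    """Chain next-word predictions until a full stop or the word limit."""
    out = [start]
    while len(out) < max_words:
        nxt = predict_next(follows, out[-1])
        if nxt is None or nxt == ".":
            break
        out.append(nxt)
    return " ".join(out)

model = train_bigrams(corpus)
sentence = generate(model, "rain")
print(sentence)  # rain causes clouds
```

The toy fluently asserts that rain causes clouds, because that is what its text said most often. Nothing in it has ever looked at the sky.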

Because we automatically assume that someone who is speaking has an internal model of reality that they are referring to, we take their pronouncements as reflective of that sort of inner model. An LLM may have an internal, consistent ‘manifold’ that underlies it, but this is a manifold that represents what people have written down. Not reality.

This is the source of a mystifying amount of confusion. If you ask a recent LLM about, say, causality or counterfactuals, it will rhapsodise like a college professor. Try this now! “Tell me about Judea Pearl’s 3 levels.”

We are then astonished (we shouldn’t be!) when our model stuffs up something really basic. I tried one of my perennial favourites⌘ on Claude today:

Me: Can you think of a mammal that swims in the ocean and wears trousers?

“No mammal naturally wears trousers — that’s a human concept of clothing, not something found in nature! … Is there a riddle or joke you’re building up to? 😄”.

It’s still stuck on this as a joke. Why? Because the overwhelming weight of its training ‘tells it’ that this is a humorous riddle. That’s how the language works. Its internal manifold also distinguishes between ‘humans’ and ‘mammals’ in an artificial way. The LLM doesn’t bridge its internal gap until you force it to. It lacks an internal model of “humans as mammals”.

Me: Please reconsider your first statement …

You’re right, I apologize — humans are mammals, swim in the ocean, and wear trousers! 😄

The language has been corrected. The model remains the same. Smiley face.

Medicine and AI

‘AI’ is of course bigger than LLMs. We know that in very specific domains (spotting tumours on mammograms, diagnosing conditions based on photographs of the retina, and so on) AI models show great promise, and some can even function better than people.

This is quite different from ‘fixing Medicine’, which we know is pretty broken. Medicine is wasteful, inefficient, expensive and often wrong. Rather often, people who enter the Palace of Iatrogenesis⌘ leave damaged. We know why. We’ve already explored a model for fixing the information structures.⌘ Can LLMs help? Many people seem to think so. Let’s ask an LLM :)

Me: Can LLMs help to ‘fix’ Medicine? If so, how?

Claude Sonnet 4.6 drew me a pretty diagram,9 and in summary listed these domains:

Reducing the burden of documentation

Democratising clinical reasoning

Health literacy and access

Research synthesis

In its diagram, it also added “drug discovery”, and “medical education” that includes adaptive tutoring and case simulations.

The first problem

All of the above look like reasonable answers. And there’s your first problem. I have no guarantee that if I put the same question into Claude again, I’ll get the same answer! There is also no guarantee that the diagram will match up with the text. Claude has no internal model of the problems in Medicine and potential solutions: instead it has a model of the associated ‘linguistic’ structures, with a random component.
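The ‘random component’ can be sketched in a few lines of Python. This is a toy under loud assumptions: the candidate words and their scores are invented, and `softmax` and `sample` are hypothetical helper names. A real LLM does this over tens of thousands of tokens, one token at a time, and I am assuming the common temperature-sampling scheme rather than describing any particular vendor’s settings.

```python
import math
import random

# Hypothetical scores a model might assign to candidate next words.
scores = {"documentation": 2.0, "reasoning": 1.6, "literacy": 1.5, "research": 1.2}

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = {word: s / temperature for word, s in scores.items()}
    top = max(scaled.values())  # subtract the maximum for numerical stability
    exps = {word: math.exp(s - top) for word, s in scaled.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

def sample(scores, rng, temperature=1.0):
    """Pick one word at random, weighted by its softmax probability."""
    probs = softmax(scores, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]

rng = random.Random()  # unseeded, so every run differs
answers = [sample(scores, rng) for _ in range(10)]
greedy = max(scores, key=scores.get)  # always picks the top-scoring word

print(answers)
print(greedy)
```

Run it twice and the list of `answers` will generally differ, even though the scores never change. Only the `greedy` choice, or a temperature very close to zero, gives the same word every time.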

The answer is plausible because it somewhat matches our internal model of what the problems are. It’s not a bad answer. In a similar way, pretty much everything Claude says about Medicine will match what pretty much every reader has read about medicine. Of course it will seem sensible.

We know that the problems with medicine are deeper. They concern broken models, defective processes, excessive complexity and general disarray. Waste is conspicuous everywhere, resulting from these defects. But the LLM has no model of this, and the preponderant discussion is not about these defects, to which the LLM has literally nothing to contribute.

To be fair

The LLM also contributed some negatives, which reflects the literature on the topic. Claude again:

It’s worth being clear-eyed here. LLMs hallucinate, and in medicine that carries real stakes. They also encode the biases in their training data — historically, medical research has underrepresented women, elderly patients, and non-white populations, and an LLM trained on that literature will carry those gaps forward. And there’s a real risk that an AI that sounds confident erodes the clinician’s own reasoning rather than augmenting it.

We can modulate the bot’s output. Try the original query in the LLM of your choice, and follow it up with this:

Me: Isn’t the deeper problem in Medicine one of archaic, defective and poorly joined-up processes that together lead to waste and harm?

Only then do I get an apparently insightful response: “Yes — and this is the more honest diagnosis. LLMs are, at best, a layer on top of a system that has profound structural pathologies. The technology question is almost secondary.” Claude went on quite a bit about the importance of re-design, and how institutions and policymakers are reluctant to do just this.

Sycophant!

Cruella De Vil: What kind of sycophant are you??

Frederick: Uh, what kind of sycophant would you like me to be? (101 Dalmatians)

Here’s where our tendencies and those of the LLM intersect. We humans like praise, and often do science badly precisely because we are reluctant to welcome the criticism we so desperately need. LLMs have generally been tuned towards sycophancy, because that makes them more popular and successful.

This goes particularly badly in Medicine. Doctors like sycophancy; what we need is more honesty, and criticism. But we prefer the LLM-generated text.

“The burden of documentation”

Is documentation a burden? If so, why? I’d suggest that if asked, pretty much every clinician will agree that they are ‘burdened by documentation’. I would further like to suggest that this is (a) mostly a wrong perspective, and (b) fixable without the assistance of an LLM.

There are multiple reasons for the demands placed by documentation. In some countries (especially the USA) the documentation is onerous because management demands it—a consequence of intense litigation, and draconian billing systems.

In a properly organised medical system, documentation should be precisely what we need to do our job best. In other words, medical records that guide and teach.⌘ We need to reflect on and learn from what we ourselves write. This doesn’t seem to happen when the LLM is doing the writing.10

If management wants billable items, then they must build things so that these emerge from good documentation, without an extra burden on the clinician. It is however customary (and cheaper) to dump on the doctor.

If lawyers want better documentation of precise interactions and what was said, then the technology exists. Police can wear body cams; clinicians could similarly stream their entire patient interaction into a data store. They could then use their time on just what is needed. Good, reflective documentation. We know this won’t happen, for various reasons, but let’s imagine we got this right.

Documentation would then only be a burden if there were other remediable defects: poorly taught ‘documenters’, inadequate information systems, or not enough time. Each of these can reasonably be fixed, given insight, skill and money.

What do we get instead? Half-arsed attempts to justify LLM use because ‘throughput’ can be improved, with the occasional nod to ‘clinician burn-out’.

Democratic reasoning

Another putative value of the LLM is democratising ‘reasoning’. I’m deeply skeptical about this. Aren’t you? We’ve recently seen examples of ‘democratic reasoning’.

I come from one of the few countries that got reasoning about COVID-19 pretty much correct,⌘ and our Prime Minister effectively saved the lives of 1:250 of her citizens, based on the advice of true experts. She was then effectively driven from office through the persistent harassment of ‘reasoning’ at the level of the lowest common denominator.

Democracies need smart, savvy experts so they can flourish when they come up against problems that demand expert—not popular—responses. Similarly, doing good medicine consistently depends on having an integrated model of anatomy, physiology, pathophysiology, pharmacology (including pharmacokinetics and pharmacodynamics), combined with understanding people and being able to communicate effectively, among other skills. This requires expertise at joined-up thinking.

LLMs have none of this. Instead, they have an integrated model of language. If you “train them on Medicine” then they have an integrated knowledge of medical language as it is used in the medical literature. Often, the responses LLMs generate will concur with our understanding, but a non-expert will struggle to see when the LLM is simply filling in gaps plausibly—confabulating. The LLM will mislead, especially when actual reasoning about a model is needed.

Worse still, we will use them inappropriately. Young clinicians will believe that the LLM is more knowledgeable, and will defer; they will fail to mature their model, and the consequences will be devastating in the long term. We will lose our experts.

An LLM reads the Literature

Recently, I wrote an eight-part series on defects in the medical literature.⌘⌘ We discovered the current role of AI in the medical literature is to make things far, far worse.⌘ This runs even deeper. We know that at least several percent of the ‘archival medical literature’ is defective, often through intent. During our exploration, we also found that a lot of this is difficult to diagnose. You need to be expert at image forensics, medicine and statistics to work out where some of the defects are. Enter the sycophantic and trusting bot. Enough said.

Of course LLMs can be useful

LLMs are associative tools. They can help by presenting known patterns, where these patterns have been codified in language. They are pretty good at doing this with precisely coded language: computer languages and maths.

On the other hand, it is extremely unwise to trust LLMs (and their accoutrements) to do important tasks. They introduce difficult-to-find flaws, and gloss these over with plausible language, like a politician.

A far better use for LLMs is to check your work. Write the computer program, and then get the LLM to check it. A clinician makes a list of differential diagnoses with their likelihoods, and then uses the LLM to check they haven’t left out something important. They write the prescription, and the LLM then cross-checks this for important interactions and against the patient’s known conditions. Write your piece for Substack, and then get the LLM to proofread and fact-check it. Just don’t trust the LLM.

That is not how LLMs will be used

One estimate puts the amount of AI-generated code in 2026 at 41%. But, as large corporations are just starting to discover, firing lots of staff and then imagining that LLMs will do their jobs is a recipe for tanking your company. For example, the Swedish fintech giant Klarna eliminated about 700 positions in 2022–2024, replacing them with AIs. By mid-2025, complaints had piled up to the point where they started rehiring.

LLMs either can’t do the work (in most companies) or will make things more complex, increasing the burden on staff or inappropriately deskilling them (‘cognitive debt’). Recently Business Insider reported that Amazon lost millions of orders related to snafus by its AI coding tool. You’ve likely heard of the AI tool that wiped out a software company’s production database, and then apologised.

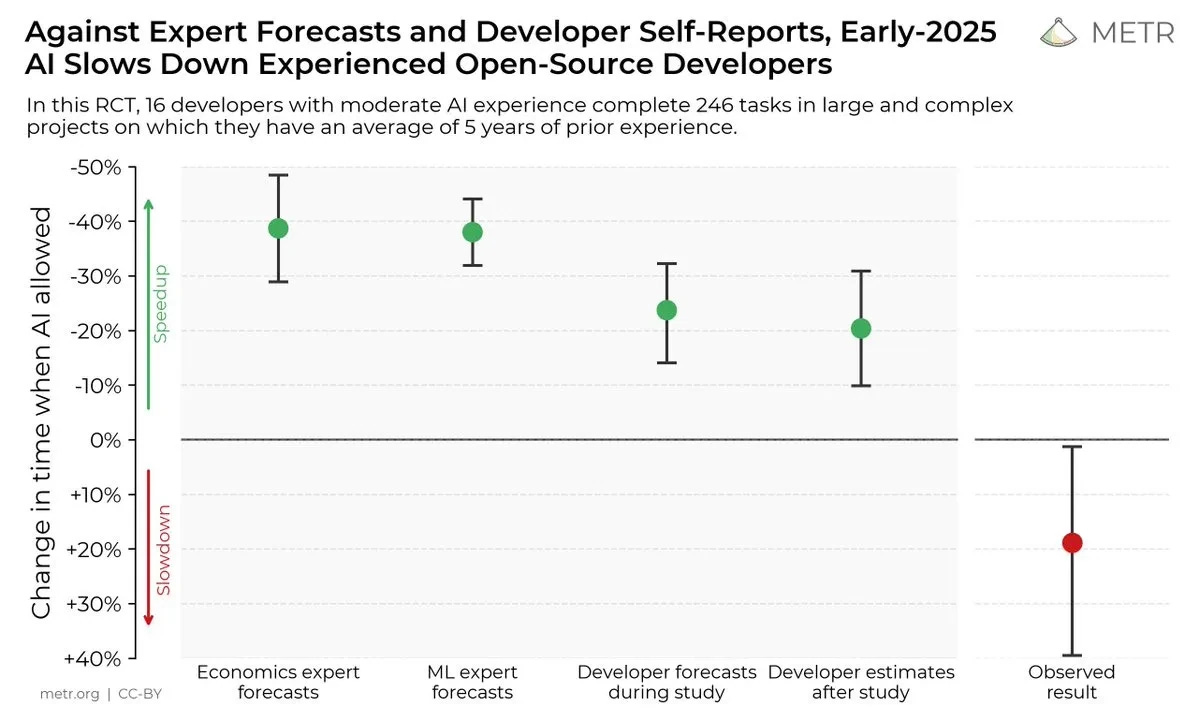

We’re all familiar with the 2025 MIT study that showed 95% of organisations investing in AI are getting zero return. METR’s randomised trial in 2025 (graphic above) showed that competent coders working on their own open-source repos were slowed by 19% when using AI, in contrast to their own and others’ assessments. Current AI agents fail dismally at realistic benchmarks like APEX-Agents. In late 2025, AI agents managed to complete 2.5% or fewer of paid, remote jobs.

According to the Wall Street Journal of 11 March 2026, an analysis of digital work activity by 164,000 people showed that after AI deployment, workers spent more time on email, messaging and chats; their use of business management tools doubled. But they spent less time on focused, uninterrupted work. People end up working harder. “Saturday and Sunday productive hours” rose about 50%.

Dumping LLMs on doctors and asking them to work harder is going to happen. I also see that in 2025, US hospitals discovered they could use AI for more aggressive billing, to the tune of over $2 billion in Blue Cross Blue Shield claims. But conversely, insurers can save nearly 10% on their payouts through aggressive AI-based ‘claims management’. Anticipate unproductive bot wars.

And yeah, we all know that the Pentagon has just booted Anthropic because it won’t allow bots to (a) perform mass surveillance of US citizens; and (b) kill people with full autonomy. Like Iranian schoolchildren. This is the climate in which we’re contemplating “sensible use of AI”. Dream on.

LLMs are a little silhouetto of a man. Are you sure you want to fandango?

My 2c, Dr Jo.

⌘ This symbol is used to indicate posts where I’ve discussed the flagged topic in more detail.

For the first image, Gemini Nanobanana struggled to remove the God from Michelangelo’s Creation of Adam but gave me a cool robot God after I’d excised him manually. I then layered the robot back over the image.

1. Despite my putting the machine option first (people tend to pick the first element in a list).

2. We’ve even built a relational model.⌘

3. Of course, to fix human health, we need to achieve the impossible task of getting politicians onside with limiting the flow of harmful commodities into communities,⌘ and promoting the flow of health-giving commodities into communities.

4. Of note is that in answer to “The tool is suited for the documentation needs of my speciality”, the average answer was 3.6/5, hardly a resounding success.

5. Not necessarily their money, though.

6. This does naturally invite the question “How many of those people are actually thinking?” This sort of speculation can become quite depressing.

7. Until the next, eye-wateringly expensive version.

8. This is obvious once you realise that the shroud can’t do the LLM’s job, or we wouldn’t need the LLM. New facts are jury rigged on, rather than being “integrated into the model”.

9. With arrows going every which way.

10. I asked an experienced clinician, who is also deeply involved with the current roll-out of an LLM scribe in EDs throughout our country, to peer review this post. They commented “What I’ve found is you have to think differently to get the same effect. I write notes to help me think. With the scribe I have not done the thinking; now when I’m reviewing the notes, I find myself having to write and think before going back to fix the scribe’s output. I suspect many people don’t bother.”

The trouble is, AI is already inserted into our health systems - and is getting it wrong. I recently had an automated text from my GP, telling me that my cholesterol level is high and they are putting me on statins. This was wrong on two counts - I don't have high cholesterol; it's not even borderline and there should be a big red flag on my notes telling the doctor that I am extremely intolerant of statins - hospital admission intolerant.

I have both the knowledge and agency to refute the suggestions in the text - but what about those who accept it at face value?

A,B,C. Accept nothing, Believe nothing, Check everything?