Fixing the Journals

(Part 8/8)

Imagine you could profit from just a tiny component (say 0.4%) of pretty much every mouthful of food eaten around the world. A component that we need, but that we consume in harmful excess. How rich would you become?

Filthy rich, as it turns out. I’m talking about salt. Most of us will die from non-communicable diseases, and among these the number one killer is high blood pressure (HBP). It is mainly caused by a combination of two things: taking in over ten times as much salt as we need, and not eating our vegetables.[1] A harmful excess that wastes human lives and diminishes our quality of life.

We’ve previously worked out⌘ that ‘health care practitioners’ are largely powerless against non-communicable diseases. These are mostly due to the flow of commodities into communities, and powerful forces govern this flow: forces that have the ear of the regulators who could change things. With salt, they operate largely unseen, tainting research by downplaying the role of salt.[2]

What is high blood pressure, really?

But there’s another group that ultimately profits too. Pharmaceutical companies get rich from treating high blood pressure. And some of this treatment is necessary. We have excellent evidence that if your usual blood pressure is 160/100 or above, you are at extreme risk of all the nasty complications of HBP: stroke, heart attacks, peripheral vascular disease, heart failure, kidney failure and ruptured blood vessels. And, of course, death. You absolutely need pharmaceutical management.[3] It’s also reasonable to treat usual blood pressures down to 140/90. Below that, the risk/benefit ratio becomes murky.

But what if we take the threshold down below 140/90 anyway? Say down to 130/80? We immediately medicalise tens of millions of ‘patients’ in the US alone. This is pure profit for the pharmaceutical industry. A potent motive for what’s happened over the past several decades.

The first attempt came with “JNC 7” (the seventh report of the US Joint National Committee on high blood pressure) in 2003. In this document, with powerful influence from industry, the authors tried to define a new disease, ‘prehypertension’, covering everything from 120/80 up to 139/89. Defining a new disease rather invites treatment. But like Purdue with the ‘safety’ of opiates, they needed ‘evidence’. Soon enough, I picked up a copy of the New England Journal of Medicine (NEJM). It featured a very weird study: TROPHY.

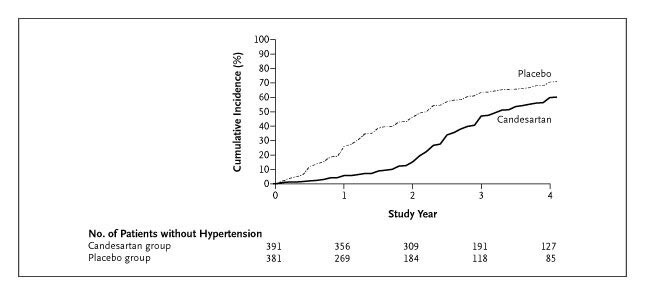

Premised on strange rat experiments, the highly conflicted authors[4] took people with ‘prehypertension’ (that word again) and randomised them into two groups. One group of 400 got placebo throughout the four years of the study; the other 409 received blood pressure treatment (candesartan) for two years, then stopped it and took placebo for the remaining two years! They theorised that if the graphs diverged at the end, this would be a powerful argument in favour of treating ‘prehypertension’. Get in early.

A bit of personal pain

When I picked up that NEJM issue in 2006, I’d just spent two days on a course that emphasised natural variation in processes over time. We’ve met this concept before⌘: ‘common-cause variation’. Things vary. It was immediately clear to me that the ‘positive’ findings of the TROPHY study were due to this sort of variation. So I set out to correct the error.

The thesis was that ‘pre-treatment’ would lower the risk of developing HBP in the two subsequent years. There were several problems. Their sample was on the borderline of HBP anyway; given the 18 measurements they’d make, their criteria for “diagnosing HBP” were lax; and with so many readings close to the cut-off, common-cause variation alone would push some of them over it, producing spurious diagnoses of “new HBP”. Their experimental setup guaranteed a positive result!
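
To see how this can happen, here is a toy Monte Carlo sketch in Python. It is not the spreadsheet or the simulation from our paper, and it is not TROPHY's actual protocol: every number in it (the spread of usual pressures, the visit-to-visit noise, the drug effect, and the hypothetical "three raised readings" rule for diagnosing HBP) is an assumption chosen purely for illustration, and the names `simulate_person` and `run_trial` are made up. Each simulated person has a fixed usual systolic pressure that never changes; only common-cause variation moves the readings about. The "treated" group has its pressure lowered for the first nine of 18 visits, and then nothing at all.

```python
import random

# Every number below is a hypothetical assumption, for illustration only.
THRESHOLD = 140.0     # systolic cut-off for a "raised" reading (mmHg)
N_VISITS = 18         # readings over four years (the figure quoted above)
TREATED_VISITS = 9    # assumed: first half of the visits = two years on drug
HITS_NEEDED = 3       # hypothetical rule: 3 raised readings => "new HBP"
USUAL_MEAN = 132.0    # assumed average usual systolic pressure of the sample
USUAL_SD = 5.0        # assumed spread of usual pressure between people
VISIT_SD = 8.0        # assumed visit-to-visit (common-cause) variation
DRUG_EFFECT = 10.0    # assumed fall in pressure, only while taking the drug


def simulate_person(rng: random.Random, treated: bool):
    """Return (usual pressure, diagnosed by mid-study, diagnosed by end)."""
    usual = rng.gauss(USUAL_MEAN, USUAL_SD)  # fixed: the drug leaves no trace
    hits = 0
    diagnosed_mid = False
    for visit in range(N_VISITS):
        on_drug = treated and visit < TREATED_VISITS
        reading = rng.gauss(usual - (DRUG_EFFECT if on_drug else 0.0), VISIT_SD)
        if reading >= THRESHOLD:
            hits += 1  # raised readings accumulate over the whole study
        if visit == TREATED_VISITS - 1:
            diagnosed_mid = hits >= HITS_NEEDED
    return usual, diagnosed_mid, hits >= HITS_NEEDED


def run_trial(n_per_group: int = 2000, seed: int = 1) -> dict:
    """Simulate a placebo group and a temporarily treated group."""
    rng = random.Random(seed)
    results = {}
    for group, treated in (("placebo", False), ("treated", True)):
        people = [simulate_person(rng, treated) for _ in range(n_per_group)]
        diagnosed = [usual for usual, _, end in people if end]
        results[group] = {
            "diagnosed_mid": sum(mid for _, mid, _ in people) / n_per_group,
            "diagnosed_end": sum(end for _, _, end in people) / n_per_group,
            # Share of "new HBP" cases whose usual pressure is below the cut-off.
            "usual_below_cutoff": (
                sum(u < THRESHOLD for u in diagnosed) / max(len(diagnosed), 1)
            ),
        }
    return results


if __name__ == "__main__":
    for group, stats in run_trial().items():
        print(group, {k: round(v, 2) for k, v in stats.items()})
```

Under these made-up assumptions, most placebo "cases" have usual pressures below 140, and the treated group still ends with fewer diagnoses even though the drug leaves no lasting trace: raised readings suppressed during treatment simply never count towards the tally.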

I worked this out in Excel (duplicating their graph), did some quick internal peer review with three experts I happen to know, and sent a letter off to the NEJM within the paltry three weeks they allow. They did not publish it.[5] Being a cocky individual, I asked why, and the editors replied that my point was “covered in the correspondence”.

It wasn’t, so I brought out the big guns. My friend Prof. Martin Turner (then at the University of Sydney) just happened to be an expert in simulation using MATLAB and Simulink, with a specific interest in HBP. We put together a paper that used data from a Canadian study of 20,000 people, and confirmed that the TROPHY results do NOT indicate a lasting effect of candesartan. We submitted it to the British Medical Journal (BMJ). In our covering letter, we were open about the fact that the NEJM had not been receptive to criticism.

The BMJ editor sent the paper out for peer review. It passed. And then something very strange happened. The BMJ is pretty transparent about its editorial process: you can watch the progress of your paper. Ours sat on the editor’s desk for ten weeks after passing peer review, and then we received the bad news: it had been rejected![6]

Several other journals sent the paper back after no more than a desultory glance. Ultimately, we published our paper in the American Journal of Hypertension, accompanied by an editorial and by a paper from another group that had identified similar problems with TROPHY.

One cheer for success

Refuting rubbish is unrewarding, to put it mildly. We spent perhaps 1000 hours working on our refutation. The TROPHY authors never even bothered to respond. On Google Scholar our paper is cited 44 times. The NEJM still features the TROPHY paper. It’s been cited 1387 times, often with enthusiasm, as if it were true.

I doubt we had any influence, but ultimately the hypertension industry abandoned trying to punt ‘prehypertension’. Instead, aided by yet another defective study in the NEJM (SPRINT), they simply redefined ‘high blood pressure’ as (you guessed it) 130/80.[7]

We’ve already garnered evidence that the journal publishing system is broken. The above is just a small, personal vignette. But it motivated me to ask about solutions.

Fixing Science

Future progress in science is predicated on good research, good publishing and robust refutation. We seem to be moving in the wrong direction. Predatory journals⌘ have weakened the foundations. Papermills⌘ have drowned many journals in trash. Individual scientists have compromised whole areas of research with photoshopped images and dodgy data.⌘ Drug companies have relentlessly pursued profit, even to the extent of serious misrepresentations of the science.⌘ There are other malign players. We’ve seen how AI has been co-opted to generate vast volumes of cruft, sometimes with hilarious results.⌘

So how do we fix this? I’d suggest that the primary problem is money.

Money talks

There’s an enormous amount of money sloshing around in scientific research. A large part (perhaps the greater part) is wasted, dissipated on research that is irrelevant, trivial or even fake. The taxpayer usually foots the bill. The allocation of grants is often ill-considered and may even be based on fraud. Influence from industry adds big biases. Research is often poorly checked, uneven and inconsistent, and difficult to replicate. For-profit entities make their money from volume and headlines, rather than from quality.

But where does this come from? Why is money misspent? We know the answer already. In past posts, we worked out how this happens:

If we set targets, we break things (Goodhart’s law⌘).

Most of the variation in a system is out of the hands of those who work there. We need to fix the processes.

If we’re going to measure, we need to understand the processes and measure appropriately over time.⌘ Walter Shewhart showed us how in 1924.

A good way to start fixing things is to understand and apply Deming’s 14 points.⌘
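
Shewhart’s idea of measuring appropriately over time can be sketched in a few lines. Below is a minimal Python version of his individuals chart (often called an XmR chart), using the conventional constant 2.66 (that is, 3/1.128) to turn the average moving range into natural process limits. The readings are hypothetical (one person’s systolic pressure at successive visits), and `xmr_limits` and `special_causes` are names made up for this sketch. Points inside the limits are treated as common-cause variation; only points outside them are flagged as possible special causes.

```python
from typing import List, Tuple

# Conventional XmR constant: 3 / d2, with d2 = 1.128 for moving ranges of two.
XMR_CONSTANT = 2.66


def xmr_limits(values: List[float]) -> Tuple[float, float, float]:
    """Return (centre line, lower limit, upper limit) for an individuals chart."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    centre = sum(values) / len(values)
    # Moving range: absolute difference between each pair of successive points.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = sum(moving_ranges) / len(moving_ranges)
    spread = XMR_CONSTANT * mr_bar
    return centre, centre - spread, centre + spread


def special_causes(values: List[float]) -> List[int]:
    """Indices of points outside the natural process limits."""
    _, lower, upper = xmr_limits(values)
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical systolic readings (mmHg) for one person at successive visits.
readings = [136, 131, 141, 134, 129, 138, 133, 142, 135, 130, 137, 132]
print(xmr_limits(readings))
print(special_causes(readings))          # []
print(special_causes(readings + [165]))  # [12]
```

With these made-up numbers, the readings of 141 and 142 cross a 140 cut-off yet sit comfortably inside the natural process limits, so on Shewhart’s reasoning they are noise rather than a signal. A genuinely unusual reading such as 165 is flagged.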

How does this all apply to the current chaos in publishing? Let’s see ...

A fix

In a brilliant recent article on For Better Science, Csaba Szabo first describes how broken the current system is, and then proposes a fix. He points out that the primary problem is the one we’ve identified: academic institutions targeting volume and neglecting quality. Targets v Goodhart, again. His solution is radical and impressive. He wants us to rebuild from the ground up:

Funders should claw back control, establishing mechanisms of data dissemination and publication.

As part of this, funders must ensure quality—they are now partners in research.

All data must be open at completion of a substantial project, together with the abstract and manuscript (e.g. on PubMed).

Open ‘peer review’ then takes place, both from the scientific community and by funder-appointed panels.

Independent replication studies must be part of the process: encouraged, funded, posted.

Research that is not up to scratch results in removal of future grant support.

It’s attractive to work out the consequences of this approach. For-profit journals and all of their supporting infrastructure will be emasculated (hence the provocative image at the start of my post, from PDSA UK). There will be fewer opportunities for both individuals and companies to game the system through bad study design, poor execution, and misrepresentation of results. The flow of money will be largely under the funders’ control.

The problem with this proposal is that it’s going to take decades for everyone to come around. Universities are notoriously slow to understand change, let alone embrace it. The Big Journals will scream like castrated animals and resist any change to their livelihood. Fraudsters currently making billions will find innovative ways to impede progress. And as we’ve seen, the influence of industry can be profound. They know whom to talk to. We can even anticipate that, in coming decades, governments will become more corrupt, and the stranglehold of industry will tighten.

Attention

I think we need to build up a head of steam before we can move.[8] A simple way to start is to make everyone more aware of the current problems. Dispel the illusions created by increasing volumes of trash, and by headlines that deceive. It’s likely that as the current crop of billionaires tightens its stranglehold over economies around the world, people will become more receptive to the idea that they’re being robbed every which way, especially as pseudoscience consistently fails to deliver and the quality of existence worsens. People may become more open to good science.

If fake studies and bad science are routinely pilloried and taint the future careers of their authors, this will have a salutary effect. But how can we change the course of the juggernaut, especially as individuals? We’re confronted by the Deming problem: by his estimate, about 94% of troubles and possibilities for improvement belong to the system, not to the individual. We have limited autonomy.

We need to use every bit of that autonomy for good. We need to use the affordances of the Internet to make sure that anyone who looks up a study can, with trivial ease, read an expert critique of it.

This won’t be easy. It’s tempting just to give up. But we need to try. We must try. How? I think we need the following:

A way to disseminate criticism of bad work—and to praise good work. We can do this. We have the Internet. It doesn’t have to be just porn, cat pictures and Facebook falsehoods.

Clear identification of both bad and good research.

Ever-increasing awareness of and participation in criticism of Science. This is what we lack. People will swallow glib falsehoods, provided they are presented in a superficial, appetising way. High-salt, pseudoscientific bonbons.

What we really need is to promote an understanding of Science.⌘ The most important part of this is closing the loop of solid criticism. If models are wrong or testing was badly done, we need to make sure everyone knows—so that clinging to falsehoods is shown for what it is. Conversely, good science must be lauded appropriately.

A lot of what we need is already out there!

Good, insightful criticism is already happening. But it is poorly co-ordinated and often dissipated: there is criticism, but very little of it goes any further. And you saw how hard it is to refute nonsense like TROPHY.

But still we must try. Recently,⌘ we met Guillaume Cabanac’s Problematic Paper Screener. Although there are limitations to the approaches taken, this is still a valuable tool that lists rubbish and worrisome papers. Right at the start⌘ of the octet, we found other resources:

The magnificent PubPeer.

Lists of predatory journals.

Quackwatch for quackery.

Data Colada for fine detail.

For Better Science with its plethora of no-holds-barred criticism.

When we looked at papermills,⌘ we discovered more intrepid heroes: Elisabeth Bik, Smut Clyde, Jana Christopher, Jennifer Byrne and multiple anonymous experts at PubPeer. Our examination of h-hacking⌘ gave us another tool: examining citations for bad behaviour.
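
As one small illustration of examining citations, here is a minimal Python sketch that computes an h-index (the largest h such that h papers each have at least h citations) twice: once on total citations and once with self-citations removed. It assumes self-citations can be identified for each paper, which in practice is the hard part, and citation cartels are harder still. The paper data and the helper names `h_index` and `h_with_and_without_self` are hypothetical.

```python
from typing import List, Tuple


def h_index(citations: List[int]) -> int:
    """Largest h such that h papers each have at least h citations."""
    h = 0
    for rank, count in enumerate(sorted(citations, reverse=True), start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


def h_with_and_without_self(papers: List[Tuple[int, int]]) -> Tuple[int, int]:
    """papers: (total_citations, self_citations) per paper (hypothetical format)."""
    total = [t for t, _ in papers]
    external = [t - s for t, s in papers]
    return h_index(total), h_index(external)


# Hypothetical author: seven papers with made-up citation counts.
papers = [(12, 2), (10, 4), (9, 5), (8, 4), (7, 4), (6, 3), (3, 1)]
print(h_with_and_without_self(papers))  # (6, 4)
```

A large gap between the two numbers is a prompt to look more closely, not proof of misbehaviour.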

Throughout, we saw the importance of being able to read a paper well, whether we were dealing with maleficent or incompetent single players,⌘ or powerful companies⌘ perverting the market for profit. The big question is “How do we get this joined up and out there?”

I’m open to suggestions. I also have a few ideas of my own—but to date, they’ve been monumentally unsuccessful. They’re also quite technical.

So in my New Year post, I’ll give you, the reader, a choice of three options. I’m quite looking forward to the new year. Are you?

My 2c, Dr Jo.

⌘ This symbol identifies another post of mine where I explore the topic in detail. Click on the link.

This is the last post in the current series. The previous one was AI dabbles in Science.

[1] There is abundant evidence of the relationship between high blood pressure and salt, especially with poor-quality diets. Read up on the DASH (Dietary Approaches to Stop Hypertension) diet.

[2] You’ve likely never even heard of EuSalt or the now defunct* Salt Institute, yet they have been funding ‘research’ into salt for decades, just as the now defunct Tobacco Institute funded ‘research’ into tobacco. Prominent editors of journals devoted to high blood pressure have taken money from them. They have funded entire university research departments. Guess where hypertension ‘experts’ often originate?

*Its protagonists have simply retreated even further into the shadows.

[3] You also need a competent clinician to ask “Is this secondary hypertension?” It’s quite correct that other measures like the DASH diet work, even for established high blood pressure, but if it’s up at 160/100, chances are you’ll need one or several drugs too. You’re not going to embrace the Kempner* rice diet, after all, nor would this be wise.

*And as an aside, he was nasty.

[4] In his book Golden Holocaust, Robert Proctor asserts that one of the main TROPHY researchers even took half a million dollars from the ‘Council for Tobacco Research’. A hired gun?

[5] The NEJM can afford to be very selective, publishing just 1% of correspondence. They generally won’t rock the boat.

[6] The BMJ mostly doesn’t “enter into correspondence”, but I managed to discover that the paper had been rejected by the hanging committee. That sounds ominous, but the hanging committee normally decides which issue a paper will appear in, not whether to accept it. It seems they had three problems: they felt the paper should have been sent to the NEJM (despite my covering letter), they “didn’t like Fig 3”, and they felt that the BMJ audience was not smart enough to understand the paper! My guess is that they simply didn’t want to stir things up. Less charitable explanations are possible.

[7] With lots of caveats that seem reasonable but ultimately guarantee that tens of millions of people will be over-treated, with no overall benefit once harms are weighed against benefits, and with net harm in older people.

[8] To change the metaphor a bit :)

As a retiree, I despair when I see how things are going.

People want simple, which is why we go for things like the h-index, which then itself gets hacked.

Create something simple that people will follow, and people will then see how they can exploit and circumvent what started as an attempt to improve simplicity and honesty.

In another publication that begins with Q, there is a group of people that fetishizes IQ, and another that fetishizes AI, without considering actual intelligence. These simple measures of something, which started out as helpful, become idols that distract us from what is valuable.

For a while, before we had a dictator and his collaborators who decided to burn down the U.S. government and cripple science there, we thought we had most of what we needed: strong funding for real science, albeit somewhat corrupted by corporations with their own profit agenda.

Unfortunately, it takes intelligent, educated people to appreciate what real science is.

I confess that before I took classes in statistics and bioinformatics, I didn't realize what a p-value was about, and I think I am pretty well read in scientific subjects. Yet understanding statistics and p-values is really near the heart of the scientific method. People with average education don't understand this: certainly not the average voter, and likely not most politicians.

What we have now is something that will take decades to repair, a period longer than I personally can expect.

Thanks for the link to your paper, Dr. Jo. I looked it up and, sadly, even though it was published in 2008 and is absolutely ancient in terms of medical science, it is still behind a pretty hefty paywall (43 USD or 39 Euros)!

And that is another topic you could write about with regard to medical journals -- access fees! They are outrageous! The brave young lady who established Sci-Hub (Alexandra Elbakyan, who is from Kazakhstan) has been trying desperately to fight this by providing medical literature for free. But as you can imagine, the wealthy publishing power-brokers and gatekeepers of medical knowledge have been fighting her for years. I imagine they -- Elsevier, Wolters Kluwer, Oxford, Wiley, Springer Nature, et al -- have been doing their best to sue the pants off her.

I have worked as a writer or editor for a couple of the above-referenced publishers, as well as a few lesser-known but powerful US-based companies, over the last 30 years in my career as a medical writer and editor. I can tell you they are all greedy. They make massive profits but, believe me, those profits do not filter down to the rank-and-file content producers like me.

But hey, at least they stopped accepting ghost-written articles! I did that too, for a few years, for Adis International (which is headquartered in Auckland!). I wrote papers that were published in cardiology journals (mostly), and some big-name KOL (key opinion leader) put his/her name on it; all he/she had to do was review it, maybe make a few corrections, and get paid! My name only ever appeared in the acknowledgment section, where I was thanked for my "editorial services", making it sound like I just proofread the thing instead of actually writing it. I should provide the disclaimer that what I wrote were review articles only, not original research!

At any rate, journals now forbid ghostwriting. Anyone who contributed to the content must now be named in the author line. That is a positive step. An even more positive step would be for them to actually financially reward their in-house writers and editors!!